Acute gastrointestinal disorders are some of the most frequent problems evaluated by ED physicians. Complaints of diarrhea account for almost 5% of visits to emergency departments (Bitterman, 1988). Although the disease entity is extremely prevalent and current evidence on the subject is nothing short of “voluminous,” practice differences among ED physicians in its evaluation and management are as varied and inconsistent as the stools themselves.

1. When do you send stool cultures, stool ovum and parasites, and/or fecal WBC? How do you use the results in diagnosis and management?

Stool testing for fecal leukocytes (WBCs) and occult blood is sent in many EDs because a positive result has traditionally been thought to be predictive of either an inflammatory or infectious etiology of the diarrhea. Fecal WBCs and RBCs are generally found in stool infected with invasive bacterial pathogens such as Salmonella, Shigella, Campylobacter, enteroinvasive E. coli, and enterohemorrhagic E. coli (E. coli O157:H7), but also in stools of patients with inflammatory disorders such as Crohn’s disease, ulcerative colitis, and pseudomembranous colitis. Studies show a sensitivity of fecal WBC for predicting bacterial infection ranging from 40% (Chitkara, 1996) to 73% (Thielman, 2004). Fecal WBC testing also appears to have a specificity of approximately 85% (Thielman, 2004) for bacterial pathogens.

Many studies have demonstrated that fecal occult blood testing (FOBT) is nearly equivalent in sensitivity to fecal WBC in predicting the presence of an invasive bacterial pathogen. One large, well-designed study of 1040 patients with acute diarrhea found that a negative FOBT had a negative predictive value of 87% for invasive bacterial pathogens (McNeely, 1996). Another large study of 446 children demonstrated an 88% sensitivity for the combination of bloody diarrhea by history, positive fecal WBC and positive FOBT for predicting a bacterial pathogen (Huicho, 1993).
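To see why the same fecal WBC result means different things in different patients, the sketch below converts the sensitivity and specificity quoted above (73% and 85%; Thielman, 2004) into predictive values using Bayes' rule. The two pre-test probabilities (5% and 30%) are hypothetical values chosen only for illustration; they do not come from the cited studies.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test applied at a given pre-test probability."""
    true_pos = sensitivity * prevalence
    false_neg = (1 - sensitivity) * prevalence
    true_neg = specificity * (1 - prevalence)
    false_pos = (1 - specificity) * (1 - prevalence)
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Fecal WBC figures quoted in the text (Thielman, 2004).
SENSITIVITY = 0.73
SPECIFICITY = 0.85

# Hypothetical pre-test probabilities of a bacterial pathogen (assumptions).
for prevalence in (0.05, 0.30):
    ppv, npv = predictive_values(SENSITIVITY, SPECIFICITY, prevalence)
    print(f"pre-test probability {prevalence:.0%}: PPV {ppv:.0%}, NPV {npv:.0%}")
```

Under these assumptions, a positive result in a low-risk patient is a false positive most of the time (PPV around 20%), while in a higher-risk patient the PPV rises to roughly two thirds. This is one way to read the argument for selective rather than routine testing.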

A problem underlying many of these studies is that the gold standard for determining sensitivities, specificities and predictive values was often stool culture, which has itself not been shown to be the greatest of tests (as we will discuss). What do we do with these results then, you ask? Well, there is no consensus on when to order fecal WBC or occult blood testing. However, guidelines by the Infectious Diseases Society of America (IDSA) and the American Association of Gastroenterology (AAG) recommend a selective approach, since most run-of-the-mill cases of infectious diarrhea are viral in etiology, self-limiting and do not require any testing. They recommend testing patients at high risk for invasive bacterial pathogens: those with fever > 101.3°F, severe or persistent diarrhea (> 7 days), severe abdominal pain, or bloody diarrhea, and those who are immunocompromised, elderly or systemically ill (Guerrant, 2001).

Stool cultures, though frequently ordered in the ED, are notoriously poor at identifying bacterial pathogens because of their relatively low yield. In six studies conducted between 1980 and 1997, only 1.5%-5.6% of stool cultures were positive. This results in a cost of about $1000 for each positive culture (Guerrant, 2001). Similarly low yields have been reported in other studies as well. Most people are also unaware that routine stool cultures in most laboratories don’t test for ALL possible pathogens, but primarily identify Shigella, Campylobacter, and Salmonella only. All other bacteria, including E. coli O157:H7, usually require special requests. As a result, most guidelines also recommend sending stool cultures only in high-risk patients (as denoted above), those with positive fecal WBC/occult blood, or those being admitted for their diarrhea. The vast majority of patients will not require stool cultures (Dupont, 2014).
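The link between low yield and high cost per positive result is simple arithmetic: cost per positive culture equals cost per culture divided by the fraction of cultures that are positive. The sketch below works backward from the two figures quoted above (about $1000 per positive culture and a yield of 1.5%-5.6%) to the per-culture cost they imply. The function names are illustrative, and the implied per-culture costs are a back-of-the-envelope inference, not figures reported by the cited studies.

```python
def cost_per_positive(cost_per_culture, yield_fraction):
    """Cost of finding one positive culture at a given positive-culture yield."""
    return cost_per_culture / yield_fraction


def implied_cost_per_culture(cost_per_positive_culture, yield_fraction):
    """Invert the relationship: per-culture cost implied by a quoted cost per positive."""
    return cost_per_positive_culture * yield_fraction


# Figures quoted in the text (Guerrant, 2001).
QUOTED_COST_PER_POSITIVE = 1000.0
YIELD_RANGE = (0.015, 0.056)

for y in YIELD_RANGE:
    per_culture = implied_cost_per_culture(QUOTED_COST_PER_POSITIVE, y)
    print(f"yield {y:.1%}: implied cost per culture ${per_culture:.0f}")
```

The same relationship shows why selective testing helps: any strategy that raises the yield (for example, culturing only high-risk patients) lowers the cost per positive result proportionally.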

Lastly, in developed countries, routine use of stool ova and parasite testing is rarely indicated (Siegel, 1990). It is used primarily to help identify diarrhea caused by parasites including Giardia, Entamoeba, Cyclospora and Cryptosporidium. As these pathogens are relatively rare in the US, only consider sending these tests in travelers recently returning from Russia (Giardia and Cryptosporidium) or the mountainous regions of North America (Giardia), in AIDS-associated diarrhea (Cryptosporidium), in those exposed to infants at a daycare center (Giardia and Cryptosporidium), or in longstanding diarrhea not responsive to antibiotic therapy. If you clinically suspect any of these pathogens, be sure to send multiple samples for stool ova and parasites to improve the yield, as parasite excretion may be intermittent.

Bottom line: It is prudent to order fecal WBCs as a screening test in high-risk patients (denoted above), as it may help you determine the presence of an invasive bacterial pathogen, but in these patients an FOBT may be easier, cheaper, and just as good. Stool cultures should be sent if fecal WBC or occult blood testing is positive, or if patients are being admitted for their diarrhea. Stool O&P is rarely indicated or cost-effective in the US except in a few special circumstances (denoted above).

2. When do you get bloodwork? When do you pursue imaging?

Most patients who present to the ED with acute diarrhea will have a self-limited disease course. However, many physicians reflexively order a set of basic labs in these patients to check for any “electrolyte disturbances” from the presumed water loss. Many studies have shown that routine blood work in these patients is unnecessary. In a retrospective study by Olshaker et al. of 281 adult patients with acute gastroenteritis, only 1% of patients were found to have a clinically significant electrolyte abnormality that required treatment or affected disposition. None of the patients with acute gastroenteritis alone had electrolyte abnormalities. They also found that the time spent in the ED was 3-4 times longer for those patients who had electrolytes ordered (Olshaker, 1989).

A routine CBC is also unnecessary in most patients with acute diarrhea, as an elevated WBC is non-specific. A hemoglobin level may be appropriate in cases of large amounts of bloody diarrhea. A platelet count may be helpful in children with bloody diarrhea in whom you are concerned about hemolytic uremic syndrome (HUS) as a further complication, but otherwise these tests are largely unhelpful.

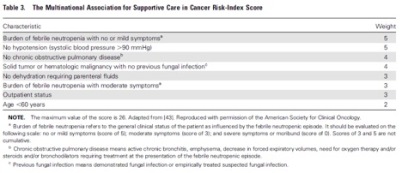

So does this mean we should never order routine lab work on anyone with acute diarrhea? Not necessarily. The above description and evidence is primarily true for cases with a self-limited diarrhea that is likely due to a viral or non-invasive bacterial pathogen (the majority of patients seen in the ED). In the smaller subgroup of patients with risk-factors for invasive bacteria (i.e., high fever, severe or persistent diarrhea (> 7 days), severe abdominal pain, bloody diarrhea, positive fecal WBC or fecal occult blood, the immunocompromised, elderly, or systemically ill/toxic appearing patients), obtain at least a CBC and BMP to assist with your evaluation and treat any electrolyte abnormalities that may be present (Dupont, 2014).
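The high-risk features listed above can be written down as a simple checklist. The sketch below is a minimal illustration of that list, not a validated decision rule: the `Presentation` class, its field names, and the function name are all hypothetical, and "elderly", "severe" and "systemically ill" are left as clinician judgments because the text gives no cutoffs for them. The fever threshold of 101.3°F and the 7-day duration come from the guideline criteria quoted earlier.

```python
from dataclasses import dataclass


@dataclass
class Presentation:
    """Hypothetical container for the findings named in the text."""
    temp_f: float = 98.6
    diarrhea_days: float = 1
    severe_diarrhea: bool = False
    severe_abdominal_pain: bool = False
    bloody_diarrhea: bool = False
    positive_fecal_wbc_or_fobt: bool = False
    immunocompromised: bool = False
    elderly: bool = False            # clinician judgment; no age cutoff given
    systemically_ill: bool = False   # clinician judgment


def high_risk_features(p):
    """Return the list of high-risk features present in this presentation."""
    checks = {
        "fever > 101.3 F": p.temp_f > 101.3,
        "severe diarrhea": p.severe_diarrhea,
        "persistent diarrhea (> 7 days)": p.diarrhea_days > 7,
        "severe abdominal pain": p.severe_abdominal_pain,
        "bloody diarrhea": p.bloody_diarrhea,
        "positive fecal WBC or occult blood": p.positive_fecal_wbc_or_fobt,
        "immunocompromised": p.immunocompromised,
        "elderly": p.elderly,
        "systemically ill or toxic appearing": p.systemically_ill,
    }
    return [name for name, present in checks.items() if present]


patient = Presentation(temp_f=102.0, bloody_diarrhea=True)
features = high_risk_features(patient)
if features:
    print("Consider CBC and BMP; high-risk features:", ", ".join(features))
else:
    print("No high-risk features; routine blood work is likely unnecessary.")
```

Returning the list of features rather than a bare yes/no keeps the reasoning visible, which matches how the criteria are used in the text: any one feature is enough to move the patient out of the "no routine labs" group.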

Similarly, most patients seen in the ED with acute diarrhea do not need any imaging to be performed. It is important, however, to expand your differential diagnosis outside of infectious etiologies of diarrhea to identify the subset of patients at risk for other pathologies for whom further imaging may be warranted.

For example, patients with appendicitis may also present with diarrhea. Vomiting and diarrhea usually precede abdominal pain in infectious diarrhea, whereas vomiting often follows abdominal pain in patients with appendicitis. In a study of 181 children < 13 years old who were eventually found to have appendicitis, 27% were initially misdiagnosed, and many of those children presented with diarrhea as an initial symptom (Rothrock, 1991). Ischemic bowel disease should also be on the differential diagnosis in elderly patients with severe abdominal pain and a history of vascular disease, as these patients may also present with occasional diarrhea and bloating (Tabrez, 2001). If ischemic bowel disease is being considered, a contrast-enhanced CT of the abdomen and pelvis should be ordered. Furthermore, small bowel obstruction and diverticulitis can often present with diarrhea, but may not be diagnosed unless formal imaging is obtained.

Be extra cautious in evaluating elderly patients with diarrhea and abdominal pain as these patients tend to have more serious, often surgical, illnesses that present atypically or go unrecognized longer (Hendrickson, 2003).

Bottom line: most patients seen in the ED with acute diarrhea require no “routine” blood work unless the patient has high-risk features. Imaging is also usually not necessary unless you are considering other diagnoses including appendicitis, mesenteric ischemia, small bowel obstruction, and diverticulitis.

3. Which patients do you treat with antibiotics?

Whether or not to prescribe antibiotics and to which patients is one of the most controversial and most discussed aspects of the management of diarrhea. Analyzing all the evidence currently available is enough to cause one to have diarrhea in and of itself, but fear not. Let’s break it down in pieces . . .

Why give antibiotics in the first place, you ask, if many of these disease processes are self-limited? In various studies, antibiotics appear to decrease the duration of diarrhea symptoms by about 24-48 hours regardless of whether the stool was guaiac positive, fecal WBC positive, or culture positive (Guerrant, 2001; Dryden, 1996; Wistrom, 1992). The moderately to severely ill seem to benefit more from antibiotics. Why would antibiotics decrease symptoms in patients with culture-negative stools? Some believe the antibiotics are eradicating bacterial pathogens that stool cultures were unable to detect. Traditionally it was thought that antibiotics may not be beneficial in mild-moderate diarrhea, due to their tendency to prolong the carrier state, especially amongst those infected with Salmonella. However, newer studies show that carrier rates are approximately equivalent in those treated with or without antibiotics (Dryden, 1996).

So now that we understand why we may give antibiotics, the question becomes who we should give them to. Many experts argue that most patients, regardless of symptoms and lab test results, don’t need antibiotics since most acute diarrheal illnesses are self-limited. Additionally, further prescription of antibiotics will lead to increased drug resistance and side effects. Other guidelines, including those from the IDSA and AAG, provide a more conservative approach. They state that patients should receive antibiotics if they present with symptoms of traveler’s diarrhea, as immediate treatment can reduce symptom duration by 2-3 days (Guerrant, 2001). They further recommend antibiotic therapy in patients with high fever (> 101.3°F), a history suspicious for a moderate-severe bacterial infection, guaiac-positive stools or positive fecal WBC (Guerrant, 2001). Some criticize the IDSA guidelines for relying too heavily on stool testing to decide whether or not to give antibiotics.

Just as important as knowing which patients to give antibiotics to is knowing which patients to be cautious with. In general, antibiotics are not advised for the treatment of diarrhea in most pediatric patients. The cornerstone of treatment in pediatric patients is fluid replacement. Diarrhea accounts for 9% of hospitalizations in children < 5 years of age, and inadequate fluid replacement is what leads to these admissions (Cicirello, 1994). Caution is also advised in prescribing antibiotics to patients with grossly bloody diarrhea, because one of the common causes of grossly bloody diarrhea is enterohemorrhagic E. coli (EHEC, also known as E. coli O157:H7). Various studies (including one published in the New England Journal of Medicine in 2000) demonstrated a higher risk of HUS in pediatric patients with EHEC who were treated with antibiotics (Wong, 2000). There is also concern that elderly patients with EHEC may develop TTP if treated with antibiotics.

If you decide to give antibiotics to a patient with an acute diarrheal illness, which antibiotics should you give? Most studies and current guidelines recommend ciprofloxacin to help eradicate acute bacterial pathogens. Two basic regimens exist: either a one-time dose of ciprofloxacin 1 g or ciprofloxacin 500 mg twice a day for 3 days. Some regimens use macrolides instead, as fluoroquinolones may not be effective in cases of Campylobacter because of resistance (Dupont, 2014).

Bottom line: In most cases of watery diarrhea, no antibiotics are needed as the disease is usually self-limiting. When there is concern for invasive disease (positive fecal WBCs or RBCs, or young, otherwise healthy adults with grossly bloody stools), it may be reasonable to prescribe ciprofloxacin 500 mg BID x 3 days to help shorten symptoms by 24-48 hours (although many sources argue that this is unnecessary). Also, be cautious in giving antibiotics to pediatric and elderly patients with grossly bloody diarrhea, as HUS and TTP are concerns.

4. What other medications do you use? Loperamide, Lomotil, Pepto? What about probiotics?

Loperamide (Imodium) is a peripheral opioid receptor agonist that acts on the mu-opioid receptors in the myenteric plexus of the large intestine without affecting the mu-receptors in the CNS. It works by slowing gastrointestinal motility, thereby allowing more time for fluid and electrolytes to be absorbed from the fecal material. Loperamide is generally considered to be safe in most acute infectious diarrhea in patients who are afebrile and have non-bloody diarrhea and those individuals with chronic diarrhea from inflammatory bowel disease (Gore, 2003).

In those with more severe illnesses (immunocompromised, bloody diarrhea, fever > 101.3°F), some experts believe the use of Loperamide will allow the invasive bacteria to remain in the gut for a longer period of time and potentially worsen the acute diarrheal illness. However, there is evidence that supports the use of Loperamide in sicker patients in combination with antibiotics. In two studies, one in Thailand on patients with dysentery and another amongst US soldiers with traveler’s diarrhea, Loperamide effectively reduced the number of loose bowel movements compared to placebo when given as an adjunct to ciprofloxacin (Petrucelli, 1992; Murphy, 1993).

Loperamide may increase the risk of HUS in pediatric patients (Guerrant, 2001; Cimolai, 1990) and many guidelines advise against the use of anti-motility agents in pediatric patients.

Lomotil (diphenoxylate/atropine) is a combined opiate agonist (diphenoxylate) and anticholinergic (atropine) agent that is also available as an adjunct for symptomatic treatment of diarrhea. The diphenoxylate component acts on the mu-receptors of the gut wall in a similar fashion to Loperamide. However, its mu effects are not restricted to the periphery, as it may cross into the CNS. As a result, atropine is combined in the medication to discourage overdose. From the drug description alone, it can be seen that this medication may be more dangerous and habit-forming than Loperamide in treating the acute symptoms of diarrhea. Diphenoxylate has not been studied in any randomized clinical trials and is not recommended by many experts for symptomatic treatment of acute diarrhea.

Bismuth subsalicylate, sold most commonly under the brand name Pepto-Bismol, functions as an anti-secretory, anti-motility agent with some weak but present bactericidal properties. As it is a salicylate, its toxicologic considerations, especially in pediatric patients, require extreme caution and likely avoidance in small children out of concern for at-home dosing misadventures. Very little rigorous study of Pepto exists, with some volunteer reports of its use in traveler’s diarrhea in military personnel decreasing symptoms subjectively (Putnam, 2006). One double-blinded randomized study of Bangladeshi children aged 4-36 months found a modest improvement in acute diarrheal illness (Chowdhury, 2001). An open-label study in adult volunteers found loperamide to be faster and more effective than Pepto (Dupont, 1990). Pepto, though, may be particularly useful in cases of norovirus, a common cause of acute diarrhea (Pitz, 2015). If toxicity is avoided in dosing, there is little downside in including this in a patient’s antidiarrheal armamentarium.

Probiotics are live organisms found in a variety of foodstuffs that have been used to help colonize the intestine with “good bacteria” to prevent or treat both infectious and antibiotic associated diarrhea. Lactobacillus is one of the most widely available and studied of these probiotics. In the Cochrane Review of over 23 studies involving over 1900 adults and children, probiotics were found to reduce the overall risk of having diarrhea at 3 days by approximately 35% and reduced the duration of the diarrhea by approximately 30 hours (Allen, 2004). Multiple other studies show similar results while also demonstrating a good safety profile.

With regard to the use of probiotics in the prevention of antibiotic-associated diarrhea, the largest meta-analysis was conducted in 2012 (62 studies, 11,000 patients). A majority of the included studies used Lactobacillus as the probiotic. The analysis found a 42% lower risk of developing antibiotic-associated diarrhea with probiotics than in control groups (RR 0.58, 95% CI 0.5-0.68), with a number needed to treat (NNT) of 13 to prevent one case of antibiotic-associated diarrhea (Hempel, 2012).
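The relative risk and NNT quoted above are tied together by the baseline (control-group) risk: absolute risk reduction = control risk × (1 − RR), and NNT = 1 / absolute risk reduction. The sketch below uses only the RR of 0.58 and NNT of 13 from the text to back out the control-group risk they imply, then shows how the NNT would change at a lower baseline risk. The 5% baseline is a hypothetical value for illustration, and the implied control risk is an inference from the quoted numbers, not a figure taken from the meta-analysis.

```python
def nnt_from_relative_risk(control_risk, relative_risk):
    """NNT = 1 / ARR, where ARR = control_risk * (1 - relative_risk)."""
    absolute_risk_reduction = control_risk * (1 - relative_risk)
    return 1 / absolute_risk_reduction


def implied_control_risk(nnt, relative_risk):
    """Control-group risk implied by a quoted NNT and relative risk."""
    return 1 / (nnt * (1 - relative_risk))


# Figures quoted in the text (Hempel, 2012).
RELATIVE_RISK = 0.58
QUOTED_NNT = 13

baseline = implied_control_risk(QUOTED_NNT, RELATIVE_RISK)
print(f"implied control-group risk: {baseline:.1%}")

# Hypothetical lower-risk population (assumption for illustration only).
low_risk_nnt = nnt_from_relative_risk(0.05, RELATIVE_RISK)
print(f"NNT at a 5% baseline risk: {low_risk_nnt:.0f}")
```

With these inputs the implied control-group risk is about 18%, and at a 5% baseline the NNT rises to roughly 48. The relative benefit stays the same, but the number of patients you need to treat depends heavily on how likely antibiotic-associated diarrhea was to begin with.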

Bottom line: Loperamide (Imodium) may be useful and safe in most cases of acute diarrhea. However, caution is advised in the severely ill and in those with bloody diarrhea unless an antibiotic is concurrently prescribed. Loperamide should be avoided in pediatric patients. Lomotil is not recommended for symptomatic relief. Evidence for probiotics (specifically Lactobacillus) is promising for both the prevention and treatment of infectious and antibiotic-associated diarrhea.

Author: Bhandari Editors: Swaminathan, Bryant